AWS Big Data Blog

Reduce costs and increase resource utilization of Apache Spark jobs on Kubernetes with Amazon EMR on Amazon EKS

Amazon EMR on Amazon EKS is a deployment option for Amazon EMR that allows you to run Apache Spark on Amazon Elastic Kubernetes Service (Amazon EKS). If you run open-source Apache Spark on Amazon EKS, you can now use Amazon EMR to automate provisioning and management, and run Apache Spark up to three times faster. If you already use Amazon EMR, you can run Amazon EMR-based Apache Spark applications with other types of applications on the same Amazon EKS cluster to improve resource utilization and simplify infrastructure management.

Earlier this year, we launched support for pod templates in Amazon EMR on Amazon EKS to make it simpler to run Spark jobs on shared Amazon EKS clusters. A pod is a group of one or more containers, with shared storage and network resources, and a specification for how to run the containers. Pod templates are specifications that determine how each pod runs.

When you submit analytics jobs to a virtual cluster on Amazon EMR on EKS, Amazon EKS schedules the pods to execute the jobs. Your Amazon EKS cluster may have multiple node groups and instance types attached to it, and these pods could get scheduled on any of those Amazon Elastic Compute Cloud (Amazon EC2) instances. Organizations today need better resource utilization, the ability to run jobs on specific instances based on instance type, amount of disk, disk IOPS, and more, and control over costs when multiple teams submit jobs to a virtual cluster on Amazon EMR on EKS.

In this post, we look at support in Amazon EMR on EKS for Spark’s pod template feature and how to use it for resource isolation and controlling costs.

Pod templates have many use cases:

- Cost reduction – To reduce costs, you can schedule Spark driver pods to run on EC2 On-Demand Instances while scheduling Spark executor pods to run on EC2 Spot Instances.

- Resource utilization – To increase resource utilization, you can support multiple teams running their workloads on the same Amazon EKS cluster. Each team gets a designated Amazon EC2 node group to run their workloads on. You can use pod templates to enforce scheduling on the relevant node groups.

- Logging and monitoring – To improve monitoring, you can run a separate sidecar container to forward logs to your existing monitoring application.

- Initialization – To run initialization steps, you can add a separate init container that runs before the Spark main container starts. The init container can perform steps such as downloading dependencies or generating input data, which the Spark main container then consumes.

Prerequisites

To follow along with the walkthrough, ensure that you have the following resources created:

- An AWS account that provides access to AWS services

- An AWS Identity and Access Management (IAM) user with an access key and secret key to configure the AWS Command Line Interface (AWS CLI), and permissions to create IAM roles, IAM policies, and AWS CloudFormation stacks

- An Amazon Simple Storage Service (Amazon S3) bucket to store your pod templates

- The AWS CLI, eksctl, and kubectl. Instructions for installing these tools are given in Step 1.

Solution overview

We look at a common use case in organizations where multiple teams want to submit jobs and need resource isolation and cost reduction. In this post, we simulate two teams submitting jobs to the Amazon EMR on EKS cluster and see how to isolate the resources between them when running jobs. We also look at cost reduction by running the Spark driver on EC2 On-Demand Instances and the Spark executors on EC2 Spot Instances. The following diagram illustrates this architecture.

To implement the solution, we complete the following high-level steps:

- Create an Amazon EKS cluster.

- Create an Amazon EMR virtual cluster.

- Set up IAM roles.

- Create pod templates.

- Submit Spark jobs.

Create an Amazon EKS cluster

To create your Amazon EKS cluster, complete the following steps:

- Create a new file (create-cluster.yaml) with the following contents:

- Install the AWS CLI.

You can use version 1.18.157 or later, or version 2.0.56 or later. The following command is for Linux OS:

For other operating systems, see Installing, updating, and uninstalling the AWS CLI version.

- Install eksctl (you must have eksctl version 0.34.0 or later):

- Install kubectl:

- Create the Amazon EKS cluster using the create-cluster.yaml config file created in earlier steps:

This step launches an Amazon EKS cluster with five managed node groups: two node groups each for team-1 and team-2, one node group for Spark drivers using EC2 On-Demand capacity, and another for Spark executors using EC2 Spot capacity.

After the Amazon EKS cluster is created, run the following command to check the node groups:

You should see a response similar to the following screenshot.

Create an Amazon EMR virtual cluster

We launch the EMR virtual cluster in the default namespace:

The command creates an EMR virtual cluster in the Amazon EKS default namespace and outputs the virtual cluster ID:

Note the ID of the EMR virtual cluster to use to run the jobs.

Set up an IAM role

In this step, we create an Amazon EMR Spark job execution role with the following IAM policy:

Navigate to the IAM console to create the role. Let’s call the role EMR_EKS_Job_Execution_Role. For more information, see Creating IAM roles and Creating IAM Policies.

Set up the trust policy for the role with the following command:

Enable IAM roles for service accounts (IRSA) on the Amazon EKS cluster:

Create pod templates with node selectors and taints

In this step, we create pod templates for the Team-1 Spark driver pods and Spark executor pods, and templates for the Team-2 Spark driver pods and Spark executor pods.

Run the following commands to view the nodes corresponding to team-1 for the label team=team-1-spark-driver:

Similarly, you can view the nodes corresponding to team-1 for the label team=team-1-spark-executor. You can repeat the same commands to view the nodes corresponding to team-2 by changing the role labels:

You can constrain a pod so that it can only run on a particular set of nodes. There are several ways to do this, and the recommended approaches all use label selectors to facilitate the selection. In some circumstances, you may want to control which node the pod deploys to; for example, to ensure that a pod ends up on a machine with an SSD attached to it, or to co-locate pods from two different services that communicate a lot in the same Availability Zone.

nodeSelector is the simplest recommended form of node selection constraint. nodeSelector is a field of PodSpec. It specifies a map of key-value pairs. For the pod to be eligible to run on a node, the node must have each of the indicated key-value pairs as labels.
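The matching rule described above can be sketched in a few lines of Python. This is only an illustration of the rule, not the Kubernetes scheduler's code; the function name and the sample nodes are hypothetical, and the label values simply reuse the node group names from this post.

```python
def matches_node_selector(node_selector, node_labels):
    """Return True when every selector key-value pair appears in the node's labels."""
    return all(node_labels.get(key) == value for key, value in node_selector.items())


# Hypothetical node labels; extra labels on a node do not prevent a match.
driver_node = {"team": "team-1-spark-driver", "capacity": "on-demand"}
executor_node = {"team": "team-1-spark-executor", "capacity": "spot"}

selector = {"team": "team-1-spark-driver"}
print(matches_node_selector(selector, driver_node))    # True
print(matches_node_selector(selector, executor_node))  # False
```

An empty selector matches every node, which is why a pod without a nodeSelector can land on any node group.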

Taints are used to repel pods from specific nodes. Taints and tolerations work together to ensure that pods aren’t scheduled onto inappropriate nodes. One or more taints are applied to a node; this marks that the node shouldn’t accept any pods that don’t tolerate the taints. Amazon EKS supports configuring Kubernetes taints through managed node groups. Taints and tolerations are a flexible way to steer pods away from nodes or evict pods that shouldn’t be running. One common use case is dedicated nodes: if you want to dedicate a set of nodes, such as GPU instances, for exclusive use by a particular group of users, you can add a taint to those nodes, and then add a corresponding toleration to their pods.
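To make the interplay concrete, here is a minimal Python sketch of how a toleration is compared with a taint. It follows the documented Kubernetes matching rules (the Equal and Exists operators, and an empty effect matching any effect) but is a simplified model, not scheduler code. The function names are hypothetical, the taint key and value are taken from the scheduling event shown later in this post, and the NoSchedule effect is an assumption.

```python
def tolerates(toleration, taint):
    """Check one toleration against one taint."""
    # A toleration without an effect matches taints of every effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    operator = toleration.get("operator", "Equal")
    if operator == "Exists":
        # Exists ignores the value; an empty key tolerates every taint.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    return (toleration.get("key") == taint["key"]
            and toleration.get("value", "") == taint.get("value", ""))


def can_schedule(tolerations, node_taints):
    """A pod fits only if every hard taint is matched by some toleration."""
    hard = [t for t in node_taints if t["effect"] in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, taint) for tol in tolerations) for taint in hard)


# Assumed taint on a team-1 node group (effect is an assumption).
team_1_taint = {"key": "team-1", "value": "general-purpose", "effect": "NoSchedule"}

team_1_toleration = {"key": "team-1", "operator": "Equal",
                     "value": "general-purpose", "effect": "NoSchedule"}
team_2_toleration = {"key": "team-2", "operator": "Equal",
                     "value": "general-purpose", "effect": "NoSchedule"}

print(can_schedule([team_1_toleration], [team_1_taint]))  # True
print(can_schedule([team_2_toleration], [team_1_taint]))  # False
print(can_schedule([], [team_1_taint]))                   # False
```

Note that a toleration only permits scheduling onto a tainted node; it does not attract the pod there. That is why the pod templates in this post pair each toleration with a nodeSelector.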

nodeSelector provides a very simple way to attract pods to nodes with particular labels. Taints on the other hand are used to repel pods from specific nodes. You can apply taints to a team’s node group and use pod templates to apply a corresponding toleration to their workload. This ensures that only the designated team can schedule jobs to their node group. The label, using affinity, directs the application to the team’s designated node group and a toleration enables it to schedule over the taint. During the Amazon EKS cluster creation, we provided taints for each of the managed node groups. We create pod templates to specify both nodeSelector and tolerations to schedule work to a team’s node group.

- Create a new file team-1-driver-pod-template.yaml with the following contents:

Here, we specify nodeSelector as team: team-1-spark-driver. This makes sure that Spark driver pods are running on nodes created as part of node group team-1-spark-driver, which we created for Team-1. At the same time, we have a toleration for nodes tainted as team-1.
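As a rough illustration of the scheduling fields such a template carries, the following Python sketch builds the structure as a dictionary and prints it as JSON for inspection. It is not the file from this post: the helper name is hypothetical, the toleration key and value are inferred from the taint shown in the scheduling event later in the post, and the Equal operator and NoSchedule effect are assumptions. Only nodeSelector and tolerations are shown; a real template may also name containers or volumes.

```python
import json


def build_pod_template(node_label, taint_key, taint_value, effect="NoSchedule"):
    """Build the scheduling-related part of a pod template as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "spec": {
            # Attract the pod to nodes labeled team=<node_label>.
            "nodeSelector": {"team": node_label},
            # Allow the pod onto nodes carrying the team's taint (assumed shape).
            "tolerations": [
                {
                    "key": taint_key,
                    "operator": "Equal",
                    "value": taint_value,
                    "effect": effect,
                }
            ],
        },
    }


template = build_pod_template("team-1-spark-driver", "team-1", "general-purpose")
print(json.dumps(template, indent=2))
```

Calling the same helper with team-1-spark-executor, team-2-spark-driver, or team-2-spark-executor (and the matching team key) gives the equivalent structure for the other three templates.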

- Create a new file team-1-executor-pod-template.yaml with the following contents:

Here, we specify nodeSelector as team: team-1-spark-executor. This makes sure that Spark executor pods are running on nodes created as part of node group team-1-spark-executor, which we created for Team-1. At the same time, we have a toleration for nodes tainted as team-1.

- Create a new file team-2-driver-pod-template.yaml with the following contents:

Here, we specify nodeSelector as team: team-2-spark-driver. This makes sure that Spark driver pods are running on nodes created as part of node group team-2-spark-driver, which we created for Team-2. At the same time, we have a toleration for nodes tainted as team-2.

- Create a new file team-2-executor-pod-template.yaml with the following contents:

Here, we specify nodeSelector as team: team-2-spark-executor. This makes sure that Spark executor pods are running on nodes created as part of node group team-2-spark-executor, which we created for Team-2. At the same time, we have a toleration for nodes tainted as team-2.

Save the preceding pod template files to your S3 bucket or refer to them using the following links:

- s3://aws-data-analytics-workshops/emr-eks-workshop/scripts/team-1-driver-template.yaml

- s3://aws-data-analytics-workshops/emr-eks-workshop/scripts/team-1-executor-template.yaml

- s3://aws-data-analytics-workshops/emr-eks-workshop/scripts/team-2-driver-template.yaml

- s3://aws-data-analytics-workshops/emr-eks-workshop/scripts/team-2-executor-template.yaml

Submit Spark jobs

In this step, we submit the Spark jobs and observe the output.

Substitute the values of the EMR virtual cluster ID, EMR_EKS_Job_Execution_Role ARN, and S3_Bucket:

Submit the Spark job:
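As a sketch of what such a submission carries, the following Python builds a request dictionary whose field names mirror the Amazon EMR on EKS StartJobRun request, with the pod templates passed through Spark's spark.kubernetes.driver.podTemplateFile and spark.kubernetes.executor.podTemplateFile settings. Everything else is an assumption for illustration: the helper name, the job name, the entry point path, the release label, the account ID in the role ARN, and the object keys under the bucket (which assume you uploaded the template files under the names used earlier).

```python
import json


def build_job_request(virtual_cluster_id, execution_role_arn, s3_bucket, team,
                      release_label, entry_point):
    """Assemble a StartJobRun-style request that points Spark at a team's pod templates."""
    prefix = f"s3://{s3_bucket}/{team}"
    spark_params = " ".join([
        f"--conf spark.kubernetes.driver.podTemplateFile={prefix}-driver-pod-template.yaml",
        f"--conf spark.kubernetes.executor.podTemplateFile={prefix}-executor-pod-template.yaml",
    ])
    return {
        "name": f"spark-job-{team}",  # hypothetical job name
        "virtualClusterId": virtual_cluster_id,
        "executionRoleArn": execution_role_arn,
        "releaseLabel": release_label,
        "jobDriver": {
            "sparkSubmitJobDriver": {
                "entryPoint": entry_point,
                "sparkSubmitParameters": spark_params,
            }
        },
    }


request = build_job_request(
    virtual_cluster_id="<virtual-cluster-id>",  # substitute your value
    execution_role_arn="arn:aws:iam::111122223333:role/EMR_EKS_Job_Execution_Role",  # placeholder account
    s3_bucket="<S3_Bucket>",                    # substitute your value
    team="team-1",
    release_label="emr-6.3.0-latest",           # assumption; use the release you run
    entry_point="s3://<S3_Bucket>/scripts/job.py",  # hypothetical script location
)
print(json.dumps(request, indent=2))
```

Switching the team argument to team-2 is the only change needed to point the same job at the other team's templates, which is the property the walkthrough relies on.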

After submitting the job, run the following command to check if the Spark driver and executor pods are created and running:

You should see output similar to the following:

Let’s check the pods deployed on team-1’s On-Demand Instances:

Let’s check the pods deployed on team-1’s Spot Instances:

When the executor pods are running, you should see output similar to the following:

To check the status of the jobs on Amazon EMR console, choose the cluster on the Virtual Clusters page. You can also check the Spark History Server by choosing View logs.

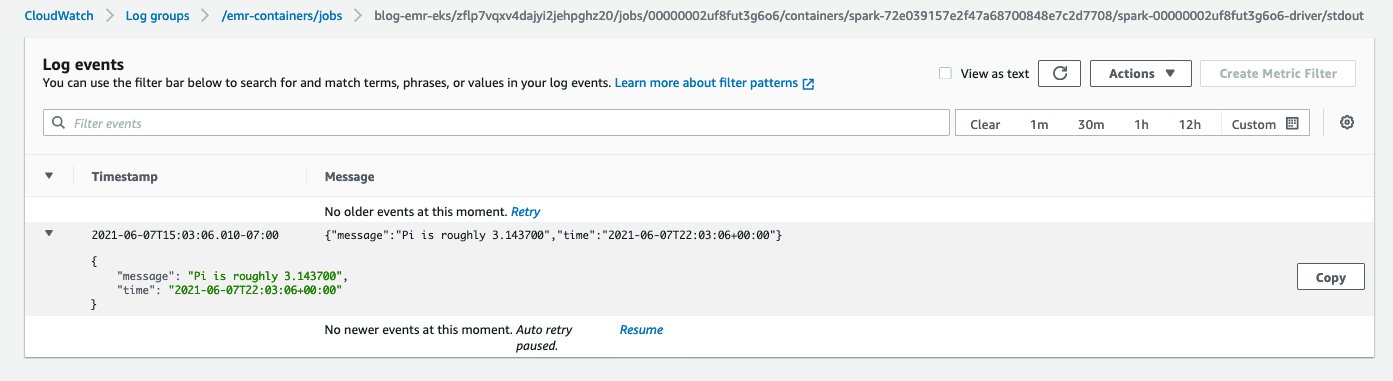

When the job is complete, go to Amazon CloudWatch Logs and check the output by choosing the log (/emr-containers/jobs/<<xxx-driver>>/stdout) on the Log groups page. You should see output similar to the following screenshot.

Now submit the Spark job as team-2 and specify the pod template files pointing to team-2’s driver and executor pod specifications and observe where the pods are created:

We can check the status of the job on the Amazon EMR console and also by checking the CloudWatch logs.

Now, let’s run a use case where Team-1 doesn’t specify the correct toleration in the Spark driver’s pod template. We use the following pod template. As per the toleration specification, Team-1 is trying to schedule a Spark driver pod on nodes with label team-1-spark-driver and also wants it to get scheduled over nodes tainted as team-2. Because team-1 doesn’t have any nodes with that specification, we should see an error.

Submit the Spark job using this new pod template:

Run the following command to check the status of the Spark driver pod:

Let’s describe the driver pod to check the details. You should notice a failed event similar to the following:

Warning FailedScheduling 28s (x3 over 31s) default-scheduler 0/17 nodes are available: 8 node(s) had taint {team-1: general-purpose}, that the pod didn't tolerate, 9 node(s) didn't match Pod's node affinity

This shows that if the pod template doesn’t have the right tolerations, the pod doesn’t tolerate the taints on the team’s nodes, so the scheduler can’t place it on those nodes and the pod stays pending.
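The failure can be reproduced in miniature with a toy model of the two checks. The sketch below is a simplification in Python, not the scheduler's implementation: it handles only Equal-style tolerations, uses one hypothetical node per node group instead of the 17 nodes in the event, and assumes that team-2's taint mirrors team-1's (value general-purpose, effect NoSchedule).

```python
def tolerated(taint, tolerations):
    """True if some toleration matches the taint's key, value, and effect."""
    return any(
        t["key"] == taint["key"]
        and t.get("value") == taint.get("value")
        and t.get("effect") in (None, taint["effect"])
        for t in tolerations
    )


def explain_scheduling(pod, nodes):
    """Return the nodes that fit, plus counts of why the others were rejected."""
    fits = []
    reasons = {"untolerated taint": 0, "label mismatch": 0}
    for node in nodes:
        if not all(tolerated(taint, pod["tolerations"]) for taint in node["taints"]):
            reasons["untolerated taint"] += 1
        elif any(node["labels"].get(k) != v for k, v in pod["nodeSelector"].items()):
            reasons["label mismatch"] += 1
        else:
            fits.append(node["name"])
    return fits, reasons


def make_node(team, role):
    """Hypothetical node: label and taint shapes are assumptions based on this post."""
    return {
        "name": f"{team}-{role}-node",
        "labels": {"team": f"{team}-spark-{role}"},
        "taints": [{"key": team, "value": "general-purpose", "effect": "NoSchedule"}],
    }


nodes = [make_node(team, role)
         for team in ("team-1", "team-2")
         for role in ("driver", "executor")]

# Team-1's driver selector combined with a toleration for team-2's taint.
bad_pod = {
    "nodeSelector": {"team": "team-1-spark-driver"},
    "tolerations": [{"key": "team-2", "value": "general-purpose", "effect": "NoSchedule"}],
}
print(explain_scheduling(bad_pod, nodes))
# ([], {'untolerated taint': 2, 'label mismatch': 2})

# The same selector with the matching team-1 toleration.
good_pod = dict(bad_pod, tolerations=[
    {"key": "team-1", "value": "general-purpose", "effect": "NoSchedule"}])
print(explain_scheduling(good_pod, nodes))
# (['team-1-driver-node'], {'untolerated taint': 2, 'label mismatch': 1})
```

As in the real event, the misconfigured pod is rejected by team-1's nodes because of the taint and by team-2's nodes because of the label, so no node remains.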

Clean up

Don’t forget to clean up the resources you created to avoid any unnecessary charges.

- Delete all the virtual clusters that you created:

- Delete the Amazon EKS cluster:

- Delete the EMR_EKS_Job_Execution_Role role and policies.

Summary

In this post, we saw how to create an Amazon EKS cluster, configure Amazon EKS managed node groups, create an EMR virtual cluster on Amazon EKS, and submit Spark jobs. With pod templates, we saw how to manage resource isolation between various teams when submitting jobs and also learned how to reduce cost by running Spark driver pods on EC2 On-Demand Instances and Spark executor pods on EC2 Spot Instances.

To get started with pod templates, try out the Amazon EMR on EKS workshop or see the following resources:

- Use Kubernetes Pod Templates with Amazon EMR on Amazon EKS to simplify running Spark workloads and control costs

- Using pod templates

- Pyspark Job submission

About the Author

Saurabh Bhutyani is a Senior Big Data specialist solutions architect at Amazon Web Services. He is an early adopter of open source Big Data technologies. At AWS, he works with customers to provide architectural guidance for running analytics solutions on Amazon EMR, Amazon Athena, AWS Glue, and AWS Lake Formation.